Why Letting Agents Touch Money Is Dangerous

And How to Do It Anyway

February 5, 2026

This blog explores why letting AI agents touch money is inevitable. Autonomous agents promise efficiency, scale, and better outcomes by acting continuously on our behalf. But delegating tasks is not the same as trusting an agent with financial control. This post is about what has to be true before that trust makes sense.

That shift is something I’ve experienced directly. Since April 2024, my use of AI agents has evolved from helper, to co-pilot, and only gradually into execution.

Read-only agents are prompt dashboards. Useful at synthesizing large amounts of information, but even that has limits: stale prices, missing protocol data, incomplete context. And most models that actually understand what I want stop exactly where things get interesting: execution.

But why didn't I choose to let an agent write this article? It wouldn't sound like me. It would reflect an opinion, not my opinion. The structure would be right, the voice would be off.

Now scale that problem to money: a wrong word is embarrassing; a wrong decimal is thousands gone in seconds.

If my agent could handle my finances, I wouldn't have to spend time watching markets or managing positions. It would monitor 24/7, create and execute strategies, rebalance risk, and act on my behalf.

This only works if I can decide how involved I want to be. Like with a banking advisor, delegation isn't binary. Of course I want my advisor to get me high returns, but not at any cost: some details matter.

The major pitfall is ambiguity. Before touching money, four things must be crystal clear:

• Defined authority: what it can do without asking

• Explicit boundaries: what it must never do

• Clear context: the state decisions depend on

• Intent: the outcome I actually want

Most agent systems start with intent and hope the rest sorts itself out. With money, especially on-chain, that’s backwards. Guessing wrong isn’t an error state, it’s a loss. Constraints must come before intent.

Two prompts don’t make a right - building a system that doesn't guess

Agents are good at parsing language. They’re bad at understanding what you meant.

Me: "Send half an FDUSD to Mani."

Balance: 1.55 FDUSD

Agent: 1.55 ÷ 2 = 0.775 FDUSD

Me: I meant exactly 0.5 FDUSD.

That’s not a bug. That’s the default failure mode when intent is ambiguous, the agent is autonomous and execution is irreversible.

I’ve fixed other AI problems with long prompts and memory. That doesn’t work here.

Prompts and memory only shape how the agent reasons. They don’t constrain what it can do. With money, the risk isn’t in the reasoning. It’s in the execution.

A perfect prompt can still produce a perfectly wrong transaction. What architecture changes is where ambiguity is allowed:

- Translate intent into bounded execution: vague instructions must become fully specified actions

- Enforce permissions and limits through policy: actions must fit inside predefined rules

- Be explicit about what is and isn’t allowed: if something can’t be expressed safely, it doesn’t execute

This isn’t theoretical. It’s why we implemented transfer-with-authorization for FDUSD using EIP-3009. When letting an agent move funds, we require it to construct an authorization with exact parameters: amount, recipient, validity window, and nonce. If any field is ambiguous, there is nothing to sign. The contract doesn’t infer intent; it only verifies whether the authorization matches exactly. Execution either happens as specified or not at all.
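As a rough illustration of "if any field is ambiguous, there is nothing to sign", here is a minimal sketch of the step before signing. The field names follow the EIP-3009 `transferWithAuthorization` message (from, to, value, validAfter, validBefore, nonce). Everything else is an assumption for illustration: the `build_authorization` helper, the 18-decimal scaling, and the five-minute validity window are not taken from our production code, and the EIP-712 signing itself is left out.

```python
import re
import secrets
import time
from dataclasses import dataclass
from decimal import Decimal
from typing import Optional

# Assumption: 18 decimals. Confirm against the deployed token's decimals().
TOKEN_DECIMALS = 18
ADDRESS_RE = re.compile(r"^0x[0-9a-fA-F]{40}$")


@dataclass(frozen=True)
class TransferAuthorization:
    """The six message fields EIP-3009 defines for transferWithAuthorization."""
    from_address: str
    to_address: str
    value: int         # amount in the token's smallest unit
    valid_after: int   # unix seconds; the contract requires block time > this
    valid_before: int  # unix seconds; the contract requires block time < this
    nonce: bytes       # 32 random bytes, unique per authorization


def build_authorization(
    from_address: str,
    to_address: str,
    amount: Optional[Decimal],
    ttl_seconds: int = 300,   # hypothetical default window
    now: Optional[int] = None,
) -> TransferAuthorization:
    """Return a fully specified authorization, or raise if any field is unclear."""
    for label, address in (("from", from_address), ("to", to_address)):
        if not ADDRESS_RE.match(address or ""):
            raise ValueError(f"{label} address is not a resolved 20-byte address")
    if amount is None:
        raise ValueError("amount is ambiguous; there is nothing to sign")
    scaled = amount * (10 ** TOKEN_DECIMALS)
    if scaled <= 0 or scaled != scaled.to_integral_value():
        raise ValueError("amount must be positive and representable on-chain")
    issued_at = int(time.time()) if now is None else now
    return TransferAuthorization(
        from_address=from_address,
        to_address=to_address,
        value=int(scaled),
        valid_after=issued_at - 1,
        valid_before=issued_at + ttl_seconds,
        nonce=secrets.token_bytes(32),
    )
```

The point of the design is that the builder has no "best guess" branch: an unresolved recipient or an unparsed amount raises before anything reaches a signer.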

Agent extracts:

- Asset: FDUSD → approved asset

- Recipient: Mani → known wallet

- Amount: "half" → ambiguous

Policy checks:

- Are percentage transfers allowed?

- Is the balance source explicit?

- Are rounding rules defined?

❌ Ambiguity → blocked until clarified.

The agent didn’t fail. The system refused to guess.
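The "half an FDUSD" case can be sketched in a few lines. This is a simplified, hypothetical resolver (`resolve_amount` and `AmountResolution` are names invented for this post): it accepts only an explicit decimal quantity and turns everything else into a question for the user instead of a number.

```python
from dataclasses import dataclass
from decimal import Decimal, InvalidOperation
from typing import Optional


@dataclass(frozen=True)
class AmountResolution:
    amount: Optional[Decimal]  # set only when the amount is explicit
    question: Optional[str]    # set when the user must clarify


def resolve_amount(raw: str, asset: str) -> AmountResolution:
    """Accept an exact decimal such as '0.5'; never interpret words like 'half'."""
    text = raw.strip()
    try:
        amount = Decimal(text)
    except InvalidOperation:
        return AmountResolution(
            None, f"'{text}' is not an exact amount. How many {asset} should be sent?"
        )
    if not amount.is_finite() or amount <= 0:
        return AmountResolution(None, f"The {asset} amount must be a positive number.")
    return AmountResolution(amount, None)


balance = Decimal("1.55")
print(balance / 2)                      # one reading of "half": 0.775
print(resolve_amount("half", "FDUSD"))  # blocked: comes back as a question
print(resolve_amount("0.5", "FDUSD"))   # explicit: can move on to policy checks
```

Percentage transfers could still be supported, but only as a separate, explicitly enabled rule that names the balance source and the rounding mode.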

On-Chain Money Doesn’t Forgive Mistakes

On-chain money is different because execution is settlement. Transactions run directly on a blockchain. No intermediaries. No delays. No rollback.

That’s what enables self-custody and programmable finance. It’s also why mistakes propagate instantly. No chargebacks. No buffer. In 2025 alone, hacks, scams, and exploits drained over $4 billion, including $1.37B from scams/phishing (up ~64.2% YoY), driven largely by smart contract failures, unaudited protocols, and centralized breaches.

That’s the environment agents are being asked to operate in. The danger becomes exponential when an agent makes a judgment call it was never qualified to make.

Me: "Move my stables to the best yield."

Agent: Finds a 47% APY farm on BSC. Bridges my USDC, swaps to a wrapped token I've never heard of, deposits into an unaudited contract.

The yield was real for six hours before the rug pull.

I meant best risk-adjusted yield. The agent heard "highest." Highest is often a trap.

Better prompts don’t fix this. The agent needs explicit constraints: approved protocols only, max single-protocol exposure, minimum TVL thresholds, no unaudited contracts. Without those rails, optimization turns lethal.
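Those rails can be written down as data instead of prose. The sketch below is illustrative only: the protocol names, thresholds, and the `YieldPolicy` shape are made up for this example. It filters opportunities by policy first and only then ranks by APY, so "highest" can never win on its own.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class YieldPolicy:
    approved_protocols: FrozenSet[str]
    min_tvl_usd: Decimal
    max_exposure: Decimal      # largest share of the portfolio in one protocol
    require_audit: bool = True


@dataclass(frozen=True)
class Opportunity:
    protocol: str
    apy_percent: Decimal
    tvl_usd: Decimal
    audited: bool


def violations(opp: Opportunity, policy: YieldPolicy) -> List[str]:
    """List every rule the opportunity breaks; an empty list means allowed."""
    found = []
    if opp.protocol not in policy.approved_protocols:
        found.append("protocol not approved")
    if opp.tvl_usd < policy.min_tvl_usd:
        found.append("TVL below minimum")
    if policy.require_audit and not opp.audited:
        found.append("contract not audited")
    return found


def best_allowed(opps: List[Opportunity], policy: YieldPolicy) -> Optional[Opportunity]:
    """Highest APY among opportunities that pass every rule, or None."""
    allowed = [o for o in opps if not violations(o, policy)]
    return max(allowed, key=lambda o: o.apy_percent, default=None)


def deposit_cap(portfolio_usd: Decimal, policy: YieldPolicy) -> Decimal:
    """Most that may sit in a single protocol."""
    return portfolio_usd * policy.max_exposure


# Hypothetical numbers, for illustration only.
policy = YieldPolicy(frozenset({"lender-a", "lender-b"}), Decimal("100000000"), Decimal("0.25"))
opportunities = [
    Opportunity("farm-x", Decimal("47"), Decimal("2000000"), audited=False),
    Opportunity("lender-a", Decimal("4.1"), Decimal("900000000"), audited=True),
    Opportunity("lender-b", Decimal("5.3"), Decimal("50000000"), audited=True),
]
choice = best_allowed(opportunities, policy)
print(choice.protocol, deposit_cap(Decimal("10000"), policy))
```

With these inputs the 47% farm is rejected on all three rules, and the second lender is rejected on TVL even though it is approved and audited.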

Building a strict workflow where each step gates execution

The only approach we’ve found to work is strict separation. AWS’s Agentic AI Security Scoping Matrix (Nov 2025) calls this the safe baseline: Scope 1 (no agency) agents are read-only and can’t execute changes. Once you add agency (the ability to act), you need strict boundaries and controls—exactly what this workflow engine enforces.

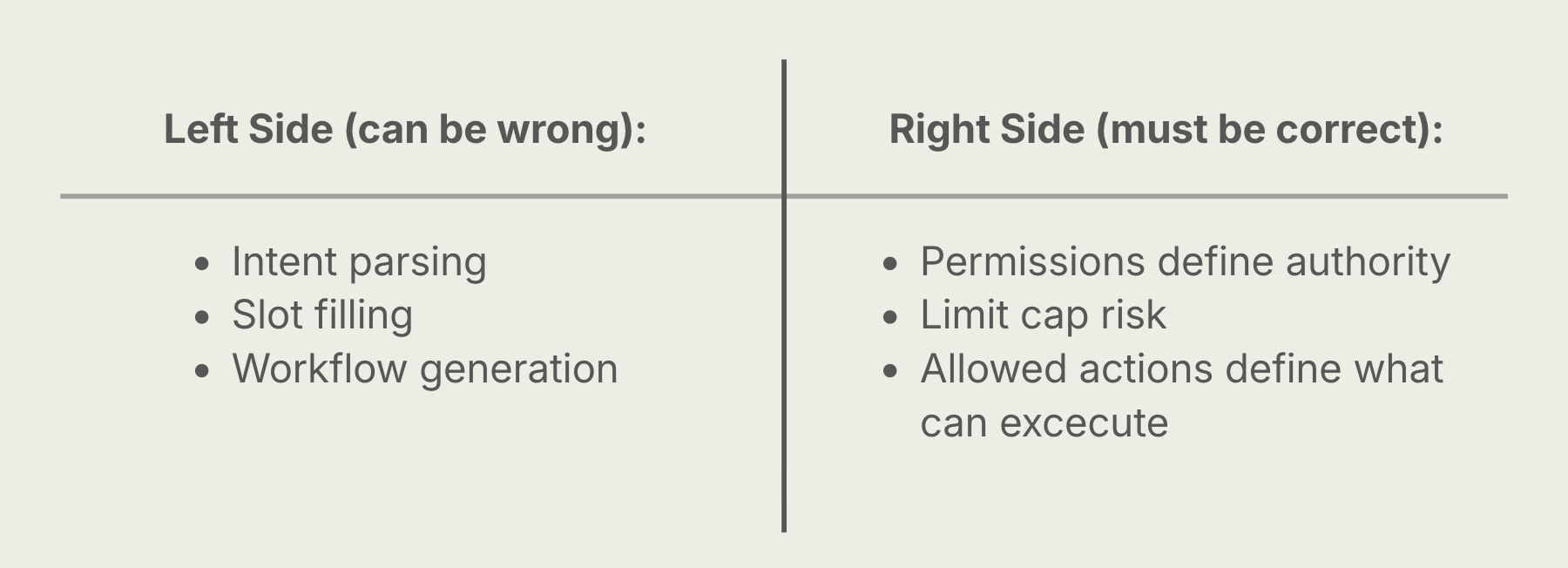

There are two sides to the system: understanding and execution.

Understanding is fuzzy. The agent parses language, fills gaps, and builds a plan. It will be wrong sometimes. That’s fine, because nothing here can move money.

Execution is strict. Every action is checked against policy. If it’s not explicitly allowed, it doesn’t run.

The workflow engine is the line between them. That’s where guessing stops.
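To make that line concrete, here is a deliberately small sketch of a default-deny gate. It is not our workflow engine; `WorkflowEngine`, `Action`, and `transfer_policy` are hypothetical names, and the executor is a stand-in that records calls instead of touching a chain. The planner can propose anything, but only actions with a registered policy that reports no problems reach the executor.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Action:
    kind: str                                   # e.g. "transfer"
    params: Dict[str, str] = field(default_factory=dict)


PolicyCheck = Callable[[Action], List[str]]     # returns a list of problems


class WorkflowEngine:
    """Default-deny gate between the fuzzy planner and the strict executor."""

    def __init__(self, policies: Dict[str, PolicyCheck], executor: Callable[[Action], str]):
        self._policies = policies
        self._executor = executor

    def submit(self, action: Action) -> str:
        check = self._policies.get(action.kind)
        if check is None:
            # No policy registered means not explicitly allowed.
            raise PermissionError(f"'{action.kind}' is not an allowed action")
        problems = check(action)
        if problems:
            raise PermissionError("; ".join(problems))
        return self._executor(action)


def transfer_policy(action: Action) -> List[str]:
    """Every field must be present; missing fields block execution."""
    required = ("asset", "recipient", "amount")
    return [f"missing {name}" for name in required if not action.params.get(name)]


executed: List[Action] = []


def fake_executor(action: Action) -> str:
    executed.append(action)   # stand-in for the hardened signer
    return "submitted"


engine = WorkflowEngine({"transfer": transfer_policy}, fake_executor)
print(engine.submit(Action("transfer", {"asset": "FDUSD", "recipient": "Mani", "amount": "0.5"})))
```

Anything the planner invents outside the registered kinds, such as a swap or a bridge, fails at `submit` with a `PermissionError` and never reaches the executor.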

Models don’t sign. Prompts don’t sign. Only hardened execution does.

You shouldn't do anything you don't understand — and neither should your agent. Let proper architecture guide you.

If you don’t give your agents safety rails, you’re being reckless.

Written by: Christian Nielsen - Head of Platform

Christian leads the Platform team at Finance District, focusing on building reliable, scalable systems that underpin product development and cross‑team initiatives.
